The fraud attack your security stack was never built to stop is already in your customers’ hands. Here’s what fraud managers, security leaders, and risk officers need to know — and what to do about it.

Attackers no longer need to build convincing fakes. AI does it for them — instantly, at scale, indistinguishable from the real thing.

AI brand impersonation via SMS has become one of the fastest-growing fraud threats facing banks, fintechs, and enterprises. The messages look exactly right. The tone matches. The sender appears legitimate. Your customers have no reliable way to tell the difference, and neither do your existing security controls.

The damage isn’t just financial. It’s structural. By the time your internal systems flag unusual activity, your customer has already received the message, trusted it, and acted on it. The attack is over before your stack knows it started.

This post explains how AI-powered brand impersonation works, why it bypasses every layer of your current security investment, and what organizations can do to stop it before customers are harmed.

The Fakes No Longer Look Fake

For years, a fraudulent text had tells. The logo was slightly off. The grammar was wrong. The urgency felt cartoonish. Customers learned to spot those signals. Organizations leaned on that awareness as a first line of defense.

AI has eliminated every one of them.

Grammar is now perfect. Tone matches your brand’s communications exactly. A fraudulent message arrives in the same thread as your legitimate alerts, written as if your own team sent it. What used to look like a fake no longer does.

Brand impersonation scams built on that trust are now among the fastest-growing categories of SMS fraud, and they scale: AI-powered campaigns generate new variants in real time, before security teams can respond.

The question is no longer whether your customers can spot a fake. The question is whether they have a way to verify before they act.

RCS (Rich Communication Services) has closed the visual gap that plain SMS once left open. Where a plain text could only carry words, an RCS message arrives with:

- A verified logo

- A branded sender ID

- High-resolution formatting

- Clickable buttons identical to your legitimate alerts

Your customer isn’t reading a suspicious-looking text. They’re looking at something that appears, visually and functionally, to be an official communication from a brand they trust. That’s what makes brand impersonation scams so difficult to defend against with existing tools.

AI-powered campaigns can reach thousands of customers simultaneously in the time it used to take to draft a single fraudulent message.

This is not a faster version of the old threat. It is a structurally different one. Your customers can’t spot these fakes, not reliably, not anymore, so the defense has to be a way to verify a message before they act on it.

The old model of SMS fraud was a numbers game. Attackers sent millions of generic texts — “Your Chase account has been suspended” — and played the odds that enough recipients banked there. It was blunt, impersonal, and relatively easy to warn customers about.

AI has replaced that model entirely.

Fraudsters are now using AI agents to scour leaked databases, public records, and social media to compile targeted customer lists for specific institutions. A regional bank in Ohio. A credit union in Arizona. A community bank your customers have trusted for thirty years. The attack is no longer random — it is researched.

The messages that follow are personalized. They reference the right bank by name. They address the customer by their actual first name. They arrive in a format that matches the institution’s real communications. For the customer receiving it, there is no generic tell. It reads like a message your bank actually sent.

The targeting of smaller institutions is not coincidental. It is strategic.

Large national banks have brand recognition, fraud operations teams, and years of public awareness campaigns behind them. Regional and community banks have something more valuable to an attacker: deep customer trust. When a customer receives a text that appears to be from their local bank — the one where they know the branch manager — they are more likely to act on it without questioning it.

More than 70% of credit unions and regional and community banks reported an increase in fraud in 2025 — a higher rate than large banks or fintechs, and up sharply from 52% the year before. (Alloy State of Fraud Report, 2025)

That increase follows from the targeting. These institutions are being selected deliberately, because their customers are more trusting and their fraud defenses are perceived as thinner.

The scale compounds the problem. What used to require a criminal organization to execute can now be launched by a single bad actor with an AI tool and a leaked customer list — in minutes, across thousands of customers, before your fraud team sees a single signal.

When Your Customer Gets Scammed, They Blame You

This is the part that doesn’t show up in threat intelligence reports.

When a customer loses money to a text message that appeared to come from your bank — same sender name, same urgent tone, same instruction to verify their account immediately — they do not blame the fraudster. They blame you.

They trusted your brand. Your name was on the message. The experience of being defrauded is inseparable in their mind from their relationship with your institution. The fact that a criminal sent the message is a legal distinction your customer is not making at that moment. What they know is that they got a text from their bank, they did what it said, and their money is gone.

The downstream consequences are immediate — customer complaints, reimbursement claims, and in many cases, account closure. The reputational damage spreads faster than your fraud team can respond.

The data reflects how serious this has become. The FTC reported $470 million in consumer losses from text scams in 2024 — five times the 2020 figure — and acknowledged that number represents only a fraction of actual harm, since the vast majority of fraud is never reported. At the institutional level, Barclays documented a 40% surge in SMS-originated scam claims in 2025, noting that scammers were pivoting to text precisely because consumer confidence in online channels had declined — making trusted institutions like banks the most effective vehicle for deception.

When your customer is defrauded by a message carrying your name, their first call is to you. Their first expectation is that you will make them whole. And their first instinct, if you can’t — or won’t — is to tell everyone they know.

Regulatory Pressure Is Shifting Liability Back to the Bank

For years, the standard regulatory position on authorized push payment fraud — where a customer is tricked into approving a payment — was that the bank bore limited liability. The customer authorized the transaction. The bank processed it correctly. Case closed.

That position is eroding.

Regulators in the US and UK are moving toward frameworks that hold financial institutions accountable when they fail to take proactive steps to protect customers from impersonation fraud. The question regulators are now asking is not just whether the transaction was authorized, but whether the institution had reasonable protections in place to prevent the customer from being deceived into authorizing it.

The CFPB has signaled increased scrutiny of how banks respond to impersonation fraud complaints. The OCC’s guidance on operational risk expects institutions to identify and mitigate emerging threats to customers — not just to internal systems. And state consumer protection agencies are watching how banks handle the surge in SMS-based impersonation claims.

The practical implication is straightforward: reactive reimbursement is no longer a sufficient answer. Banks that cannot demonstrate proactive consumer protection measures at the messaging layer are increasingly exposed — both to regulatory action and to the reputational cost of being seen as having done nothing while their customers were targeted.

The institutions best positioned in this environment are those that can show examiners a documented, active intervention at the point where the fraud actually occurs — in the message, before the customer acts.

The Protection Gap Lives Outside Your Perimeter

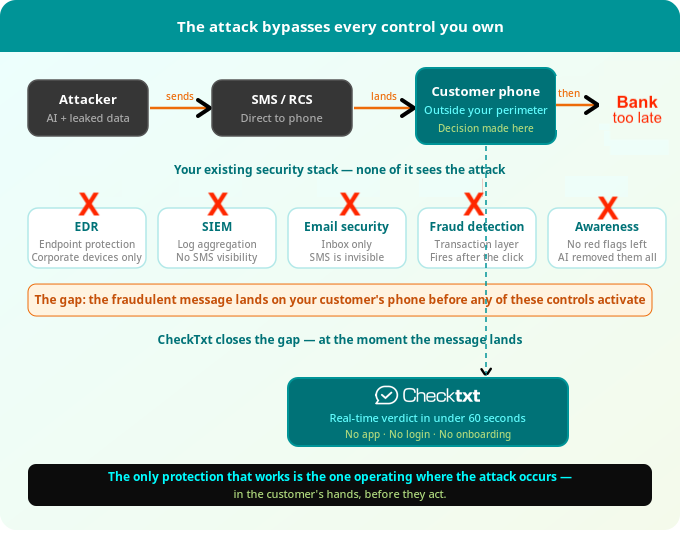

Here is the structural problem that no existing security investment solves.

Your EDR, SIEM, email security, endpoint protection, and fraud detection systems are all genuinely effective at what they were built to do. They protect your systems, your network, your employees’ devices, and your internal transaction layer. Years of investment have made enterprise security stacks more capable than ever.

But the fraudulent text message targeting your customer never enters your perimeter. It is sent directly to their personal phone by an attacker operating entirely outside your visibility. No endpoint agent sees it. No email gateway inspects it. No SIEM log captures it. No transaction monitoring flags it — because at the moment the message arrives, no transaction has occurred yet.

By the time your customer clicks the link, calls the fake number, or approves the transfer — by the time any signal reaches your internal systems — the fraud has already been initiated. Your security stack wasn’t built to stop this. Not because it’s inadequate. Because it was never designed to operate in the channel where this attack occurs.

The coverage gap is not a failure of execution. It is a failure of geography. The attack happens outside your walls, in a channel your tools were never intended to reach, at a moment when your customer is alone with a message and thirty seconds to decide whether to trust it.

That is precisely where protection needs to operate.

Closing the Gap at the Moment It Matters

Closing this gap requires protection that operates where the attack happens — in the hands of your customer, at the moment they decide whether to trust the message.

That is what CheckTxt is built to do.

When a customer receives a suspicious text, they forward it or submit a screenshot. CheckTxt’s CHAI engine analyzes it in real time — message content, brand impersonation signals, URL risk, sender behavior, and campaign-level patterns — and returns a clear verdict in under 60 seconds. No app download. No login. No onboarding friction that reduces adoption.
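To make one of those signals concrete, here is a minimal Python sketch of a URL-risk check. It is an illustration only, not CheckTxt’s implementation: the `OFFICIAL_DOMAINS` allowlist, the `examplebank.com` domain, and the `url_risk` function are all assumptions introduced for this example.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist; a real deployment would load the institution's actual domains.
OFFICIAL_DOMAINS = {"examplebank.com"}

# Matches http(s) links up to the next whitespace, quote, or angle bracket.
URL_PATTERN = re.compile(r"https?://[^\s<>\"']+", re.IGNORECASE)


def extract_hosts(message: str) -> list[str]:
    """Return the lowercase hostname of every http(s) link in the message."""
    hosts = []
    for raw in URL_PATTERN.findall(message):
        # Drop trailing punctuation that belongs to the sentence, not the link.
        host = urlparse(raw.rstrip(".,;:!?)")).hostname
        if host:
            hosts.append(host.lower())
    return hosts


def is_official(host: str) -> bool:
    """True if host is an official domain or a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in OFFICIAL_DOMAINS)


def url_risk(message: str) -> str:
    """Classify a message as 'no-links', 'official', or 'suspicious'."""
    hosts = extract_hosts(message)
    if not hosts:
        return "no-links"
    return "official" if all(is_official(h) for h in hosts) else "suspicious"
```

Comparing against the end of the hostname matters here. A link such as `https://examplebank.com.secure-login.co/verify` begins with the bank’s name but resolves to a different domain, so this check marks it as suspicious.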

The customer gets an immediate answer before they act. The bank gets something it has never had before: threat intelligence from the SMS layer — real-time visibility into which customers are being targeted, by what campaign, at what scale. When multiple customers submit variations of the same attack, CheckTxt surfaces the pattern. Banks can issue proactive advisories during live campaigns, before losses accumulate.
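One simple way to picture that kind of pattern detection is to strip out the details that vary per recipient, then group messages whose remaining templates are nearly identical. The sketch below is a hypothetical illustration, not a description of how CheckTxt works: the `normalize` and `group_campaigns` functions, the placeholder tokens, and the 0.8 similarity threshold are all assumptions.

```python
import re
from difflib import SequenceMatcher


def normalize(message: str) -> str:
    """Replace details that vary per recipient with placeholders."""
    text = message.lower()
    text = re.sub(r"https?://\S+", "<url>", text)  # links often differ per variant
    text = re.sub(r"\d+", "<num>", text)           # card digits, amounts, codes
    return re.sub(r"\s+", " ", text).strip()


def group_campaigns(messages: list[str], threshold: float = 0.8) -> list[list[str]]:
    """Greedy grouping: a message joins the first group whose template it resembles."""
    groups: list[tuple[str, list[str]]] = []
    for msg in messages:
        template = normalize(msg)
        for representative, members in groups:
            if SequenceMatcher(None, representative, template).ratio() >= threshold:
                members.append(msg)
                break
        else:
            # No existing group was similar enough, so start a new one.
            groups.append((template, [msg]))
    return [members for _, members in groups]
```

Under these assumptions, two texts that differ only in the customer’s first name, card digits, and link land in the same group, while an unrelated delivery scam starts a group of its own. A group that grows quickly is the kind of signal that could prompt a proactive advisory.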

This is SMS fraud protection for banks that operates at the right layer — not inside the enterprise boundary, but at the boundary between your brand and your customer’s trust.

Three things happen simultaneously that no existing control delivers:

- The customer gets a verdict before they act

- The bank gets visibility into an attack while it is still forming

- The fraud chain is interrupted before it reaches your systems

The attack is already in your customers’ hands. CheckTxt is the only control positioned to stop it there — at the moment it matters, before the click, before the transfer, before the call to your fraud team asking what happened.

Learn more about how SMS fraud detection works or schedule a demo to see CheckTxt in action.

References

- Federal Trade Commission. (2025, April). Top text scams of 2024. FTC Data Spotlight. https://www.ftc.gov/news-events/data-visualizations/data-spotlight/2025/04/top-text-scams-2024

- Barclays. (2025, December). Barclays exposes the defining scam trends of 2025. Barclays Press Releases. https://home.barclays/news/press-releases/2025/12/barclays-exposes-the-defining-scam-trends-of-2025/

- Wharton, N. (2026, March 6). With AI’s help, fraudsters are targeting smaller banks. U.S. News & World Report. https://www.usnews.com/banking/articles/with-ais-help-fraudsters-are-targeting-smaller-banks

- Alloy. (2025). State of Fraud Report 2025. As cited in U.S. News & World Report, March 2026.

Report Suspicious Messages to CheckTxt

The best way to understand what CheckTxt does is to try it. If you receive a suspicious text message, or if your customers are currently being targeted by an impersonation campaign, submit the message using one of the options below.

- Forward a text or send a screenshot → 442-432-5898

- Forward an email or send a screenshot → [email protected]

Receive a fraud verdict in under 60 seconds.

Frequently Asked Questions

What is AI brand impersonation fraud?

AI brand impersonation fraud is when attackers use artificial intelligence to generate SMS or RCS text messages that precisely mimic a bank’s tone, branding, and communication style. Unlike older scam texts — which contained obvious errors and generic language — AI-generated messages are indistinguishable from real bank alerts, making them significantly harder for customers to detect. Learn how CheckTxt detects AI-generated impersonation in real time.

How do scammers fake a real company's name in a text message?

Attackers use a technique called sender ID spoofing, which allows them to replace a phone number with any name or string of text — including your bank’s actual name. On standard SMS, this is straightforward to execute. On RCS, fraudsters go further, creating verified-looking sender profiles complete with logos and branded formatting that mirror the real institution’s communications. The message looks like it came from your bank because it was deliberately designed to.

Why do scammers use text messages instead of email?

SMS and RCS bypass virtually every enterprise security control. Email passes through spam filters, gateway scanners, and security tools before it reaches a recipient. A text message goes directly to a personal phone with no inspection layer.

Open rates for SMS are dramatically higher than email, and people respond to texts faster and with less scrutiny. For attackers, SMS is the path of least resistance directly to your customer — and it operates entirely outside your security perimeter. See how the attack chain works.

What is RCS and why does it make SMS fraud more dangerous?

RCS (Rich Communication Services) is the next-generation successor to SMS, supported natively on most modern Android devices and now on iPhone. It allows senders to display verified logos, branded sender names, formatted messages, and interactive buttons — the same visual features that make legitimate bank alerts look trustworthy. Fraudsters exploiting RCS can now send messages that look visually identical to your real communications.

Will current security systems protect me against SMS threats?

Traditional security tools are good at protecting internal systems, but they often lose visibility once fraud reaches customers and employees through everyday texts and emails. Closing that gap requires a different approach, one that addresses enterprise messaging fraud at the point messages are received, not after damage has already occurred.

By analyzing suspicious messages and identifying patterns across text and email, organizations gain earlier insight into how fraud campaigns form and spread. This allows teams to act sooner, reduce losses, ease the burden on security and support teams, and prevent isolated incidents from escalating into larger enterprise risks.